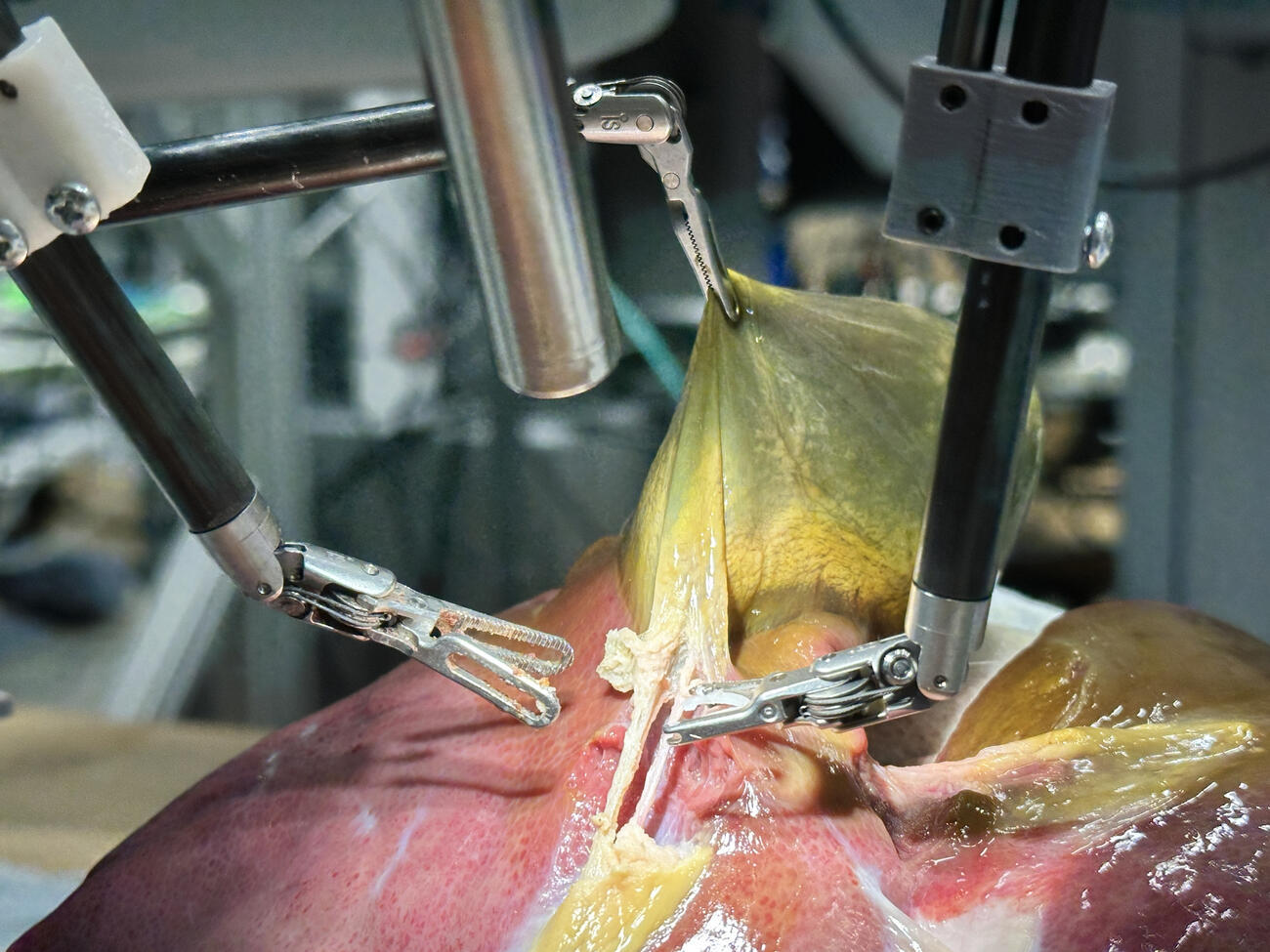

A robot trained on videos of surgeries performed a lengthy phase of a gallbladder removal without human help. The robot operated for the first time on a lifelike patient, and during the operation, responded to and learned from voice commands from the team—like a novice surgeon working with a mentor.

The robot performed unflappably across trials and with the expertise of a skilled human surgeon, even during unexpected scenarios typical of real-life medical emergencies.

Care to elaborate why?

From my point of view, I don’t see a problem with that. Or let’s say: the potential risks depend heavily on the specific setup.

Imagine if the Tesla autopilot without lidar that crashed into things and drove on the sidewalk was actually a scalpel navigating your spleen.

Absolutely stupid example, because that kind of assumes medical professionals have the same standards as Elon Musk.

Elon Musk literally owns a medical equipment company that puts chips in people’s brains. Nothing is sacred unless we protect it.

Being trained on videos means it has no ability to adapt, improvise, or use knowledge during the surgery.

Edit: However, in the context of this particular robot, it does seem that additional input was given and other training was added in order for it to expand beyond what it was taught through the videos. As the study noted, the surgeries were performed with 100% accuracy. So in this case, I personally don’t have any problems.

I actually don’t think that’s the problem. The problem is that the AI only accounts for visible, surface-level information.

AI doesn’t have object permanence: once something is out of sight, it does not exist.

If you read how they programmed this robot, it seems that it can anticipate things like that. Also keep in mind that this is only designed to do one type of surgery.

I’m cautiously optimistic.

I’d still expect human supervision, though.